Field Experiment

Background

From 6 July 2022 to 17 January 2023, we contacted 1,206 authors who published research articles based on data from the European Social Survey between 2015 and 2020. This was done as part of the Priority Program META-REP, funded by the German Research Foundation (DFG).

META-REP is a meta-scientific program to analyse and optimise replicability in the behavioral, social, and cognitive sciences. With 15 individual projects and 50+ scholars involved, META-REP seeks to answer fundamental questions about replicability.

Our project's first aim was to assess the code sharing rate among social scientists and to test whether simple changes to the wording of our request would alter authors' behavior.

Research Design

The e-mails we sent were part of a field experiment on code sharing behavior. To investigate the determinants of researchers' willingness to share code, we experimentally varied three aspects of our request's wording:

- Framing of the project (2 levels)

The positively worded version of our e-mail stated that researchers' cooperation would enhance the quality, relevance, and success of social science research. The negative version portrayed our project in light of the replication crisis and its ramifications for the credibility of social science research.

- Appeal of code sharing (4 levels)

The baseline version of our request did not include a specific appeal explaining why researchers should share their code. The other three versions stated that by sharing code, researchers would either...

  - ...honor the FAIR Guiding Principles developed by Wilkinson et al. (2016).

  - ...commit an act of academic altruism.

  - ...increase their chances of being cited as part of our replication attempt.

- Perceived effort (2 levels)

The reduced-effort version of our request emphasized that authors were not expected to clean their code before sharing. This note was absent from the baseline request.
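To make the structure of the design concrete, the three factors described above can be crossed into a set of experimental conditions. The sketch below assumes a full factorial design (2 × 4 × 2 = 16 conditions). The short level labels and the function name `build_conditions` are hypothetical and chosen only for illustration; they are not taken from the project's own materials.

```python
from itertools import product

# Factor levels as described in the text.
# The short labels are hypothetical names used only for this illustration.
FACTORS = {
    "framing": ["positive", "negative"],
    "appeal": ["baseline", "fair", "altruism", "citation"],
    "effort": ["baseline", "reduced"],
}


def build_conditions(factors):
    """Cross all factor levels (assumes a full factorial design)."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]


conditions = build_conditions(FACTORS)
print(len(conditions))  # 16
print(conditions[0])    # {'framing': 'positive', 'appeal': 'baseline', 'effort': 'baseline'}
```

Each dictionary in `conditions` represents one version of the request, i.e., one combination of framing, appeal, and effort note.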

Preliminary Results

Overall, 385 of 1,028 eligible authors responded positively to our e-mail and shared their research code. This corresponds to a sharing rate of 37.5%, which is largely in line with previous findings from other disciplines on data and code sharing. Note, however, that this estimate might not fully capture "true" code availability for two reasons: First, authors might have uploaded their code to a public repository but ignored our request. Second, a small fraction of replication packages is currently pending, meaning that authors might still deliver their code.
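The overall sharing rate follows directly from the two counts reported above. The sketch below reproduces that arithmetic and, purely as an illustration of the estimate's precision, adds a 95% Wilson score interval. The interval is not part of the reported analysis, and the function names are hypothetical.

```python
from math import sqrt


def sharing_rate(shared, eligible):
    """Share of eligible authors who provided their code."""
    return shared / eligible


def wilson_interval(successes, n, z=1.959964):
    """Wilson score interval for a proportion (z defaults to the 95% level)."""
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Counts taken from the text: 385 of 1,028 eligible authors shared code.
rate = sharing_rate(385, 1028)
low, high = wilson_interval(385, 1028)

print(f"{rate:.1%}")  # 37.5%
print(f"{low:.1%} to {high:.1%}")
```

The interval only reflects sampling uncertainty; it does not address the two caveats noted above (code published elsewhere and replication packages still pending).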

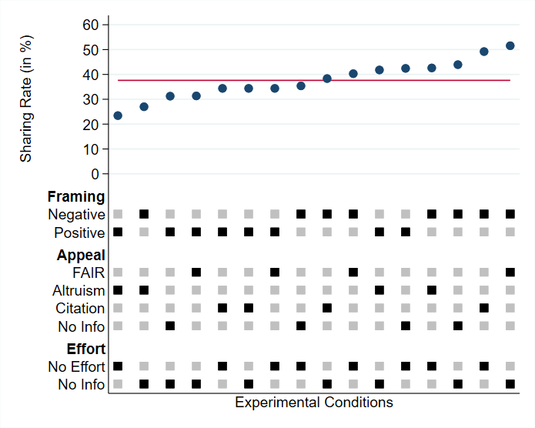

The figure above breaks down code sharing rates by experimental conditions. Using the logic of a specification curve, the plot's upper panel displays the effect size (i.e., the sharing rate) per condition, while the lower panel indicates the presence or absence of each treatment component in the respective condition.

Contrary to our hypotheses, neither the appeal nor the effort treatment influenced authors' willingness to share research code. Framing, however, did: presenting our project in light of the replication crisis led to an increase in code sharing. Five of the six conditions with the highest sharing rates used the negative framing. Conversely, six of the seven conditions with the lowest sharing rates used the positive framing.

Acknowledgement and Next Steps

We are deeply obliged to the many researchers who supported our project and went to great lengths to share their code with us. As stated in our request, we will utilize the code provided to facilitate a large-scale assessment of the replicability and robustness of social science research. If you would like to stay up to date on the progress of our project, please visit our website and follow the official META-REP Twitter account.

Contact

Should you have any further questions or concerns, please do not hesitate to get in touch with us via D.Kraehmer@soziologie.uni-muenchen.de.

A PDF version of this report is available below.

Downloads

- report_cts (180 KByte)